Principles of computation in neural networks, real and artificial

Thought-provoking connections have emerged between artificial neural networks and biological neural networks in the brain

Thought-provoking connections have emerged between (deep) artificial neural networks and biological neural networks in the brain. Convolutional neural networks (CNNs), artificial neural networks specialized in visual classification tasks¹, have been shown to develop with training a structure that is remarkably similar to the organization of visual cortex in the human brain². Recurrent neural networks, artificial networks specialized in learning sequences over time, have become useful in approximating the behavior of neurons in frontal cortex³, the part of the brain that serves as the substrate for the most complex behaviors that we regard as uniquely human (such as planning, social interactions, and action selection)⁴. The parallels between real and artificial are striking enough that researchers are feverishly trying to bridge (deep) artificial networks and the brain⁵, especially by uncovering how backpropagation, the method by which deep artificial neural networks learn, could be implemented in the brain.

Instead of getting into the nitty-gritty of how particular learning algorithms could be implemented in the brain, it might help to take a step back and contemplate the big picture: can we discern fundamental computational principles by which neural networks operate in the brain? By connecting individual brushstrokes into meaningful wholes, this article will strive to generate insight into how things might fit together across disciplinary boundaries. Principles that we find operating in the brain might show us how to endow artificial agents with more humanlike capabilities. Understanding the similarities between real and artificial networks also becomes increasingly important as we develop ways of integrating the two in neuroprostheses that augment or supplement our capabilities. Ultimately, drawing on insights from neuroscience and artificial intelligence, perhaps we can fit the puzzle pieces together and lift some of the mystery surrounding a highly complex human achievement: how a sequence of musical notes as elusive as Wagner’s Tristan chord can have such profound effects on our psyche.

Fundamental constraints

In order to understand why the brain functions the way it does, it may be useful to understand the constraints under which it must operate. Perhaps the most fundamental biological constraint, and one of the brain’s distinguishing and (so far) unparalleled features, is its economical power consumption. The brain performs all the remarkable feats of everyday life while consuming far less power than non-biological computers⁶: it is several orders of magnitude more power-efficient than silicon chips⁷.

To be clear, constraints such as economical power consumption are not a luxury, in the way we think of buying more energy-efficient light bulbs after already having been given the gift of electric light at the flick of a switch. Rather, the brain has had to be energy-efficient while inventing the light. Had we put energy efficiency first in our economy, we might indeed have come up with an infrastructure altogether different from the one we have built. As humans we like to think of ourselves as independent, but biologically we also fit and grow within the confines of an ecological niche⁸: the very principles of natural selection govern the evolution of our genome and profoundly influence how we behave⁹. Likewise, the brain should not be considered apart from its natural constraints.

There are good evolutionary reasons why it would be important to conserve energy, and taking this constraint into account may help us understand why the neural code has been written the way it is. Treating energy efficiency as a fundamental constraint at the level of algorithmic design and implementation may yet uncover new learning algorithms beyond (computation-heavy) backpropagation. Developing a pathway by which information can be transmitted economically, for instance, can help convey essential sensory information to multiple brain systems at low cost (at the expense of accuracy or information-carrying capacity)¹⁰. There is evidence that the encoding of information in the brain (via ‘spikes’, the currency of information exchange between neurons) is set up in an energy-efficient way¹¹; using the same currency (spikes, distributed uniformly in time) to convey information in artificial neural networks results in a 38-fold increase in energy efficiency compared to conventional neural networks¹². Neural coding schemes that may appear peculiar on their own (such as on-off cells in visual cortex¹³) could simply arise from energy minimization constraints¹⁴.

Unlike servers in the ‘cloud’, which you can conveniently scale up by throwing several hangars of computing power at your problem, the brain has to confine itself to the relatively small space inside our heads. The neural material with which to fill it also seems to be limited from childhood on (even in the hippocampus, where neurogenesis, the emergence of new neurons, was thought to continue into adulthood¹⁵, it actually appears to all but halt after childhood¹⁶). This also means that wiring length is fundamentally limited and therefore costly¹⁷: we can’t just lay another cable under the Atlantic to let us daydream at 5G. What the brain has done instead is to move continents together.

One way to operate within this constraint is to fold into and over oneself, maximizing surface area. In addition, units processing similar types of information are mapped close together in space, resulting in topographic maps: ordered representations that map, say, neighboring parts of the face onto neighboring parts of sensory cortex. Topographic maps also exist in other systems, such as auditory cortex and motor cortex¹⁸. It is unclear, however, whether this extends to more evolutionarily ancient and functionally basic units deeper in the brain (such as the brainstem) or to more evolutionarily recent ones (such as frontal cortex). Maps in other areas may be more abstract, and less amenable to 20th-century neuroscientific tools. Instead of concrete physical properties, these maps may represent more abstract quantities, such as probabilities that map out the anticipated rewards associated with different actions¹⁹.

Another fundamental constraint is imposed by the very environment in which we exist: the natural world, with its requirements and affordances²⁰. The body shape into which we have evolved presents us with unique computational challenges, imposed by the simple fact that we walk on two legs. The constraints imposed by our natural environment thus carve out the sandbox of our life, with its boundaries against the skies and the seas. In addition to these global constraints, the brain may be optimizing particular aspects of behavior according to narrower constraints (e.g., minimizing surprise or allocating attention effectively)²¹.

Constraints may also be exploited for the purposes of learning. Relying on our surroundings to provide particular signals reduces the need to explicitly encode everything in the genome: language attributes may be learned through exposure during childhood²² (without the need to explicitly encode everything, à la Chomsky’s ‘innate knowledge’²³), much like the way chicks are ‘imprinted’ to follow their mother during critical periods in their development²⁴. Natural scenes, with their particular regularities, exhibit statistics that are confined to a small subset of the vast space of possible combinations. The effectiveness of deep neural networks may likewise rely on this restriction of parameter space, achieved through the regularities present in our natural world²⁵.

Hierarchically stacked modules

What emerges from cognitive science is that the brain is made up of modules, specialized in processing particular types of information. There are distinct areas (cortices) in the brain that specialize in visual, auditory, somatosensory, motor, and spatial information processing, task planning and attention regulation. Some of these, such as sensory cortices, are more limited in the type of information they process: it is either visual or auditory or somatosensory etc. Others, such as frontal cortex, sit higher up the hierarchy in that they can draw on visual and auditory as well as other sensory information and combine it all in task planning and attention regulation. This scheme of hierarchically stacking specialized processing modules may play an important role in building more versatile intelligent agents.

This is not to be thought of as a command hierarchy (a single command center sitting on top, steering everything below); indeed, the brain’s attention mechanism is distributed across a wide network²⁶, and it is unclear to what degree command can be thought of as centralized. Rather, it is a hierarchy of information complexity: smaller specialized bits are composed into larger bytes as we go up the hierarchy.

As we zoom in on one of these specialized modules, the visual cortex, we find cells specialized in detecting edges of light in various orientations. These cells receive information about light intensity that originates in so-called retinal ganglion cells, each of which responds to light at a particular location along one of these edges. Retinal ganglion cells, in turn, integrate information about light intensity that they receive from rods and cones, cells specialized in detecting light at particular wavelengths (important for low-light and color vision, respectively). At the other end, cells responding to particular edges of light might be combined to make out more complex shapes, all the way up to ‘face cells’ (cells specialized in face detection).

In the same way, artificial neural networks such as CNNs build up from individual units covering a limited part of the visual field (input image), through units that receive (and pool) information from multiple adjacent visual fields, to the last layer, which can represent more abstract shapes that span the whole visual space (pixel space). Mathematically, too, CNNs involve gathering information from a subset of inputs, passing it through a stereotypical processing unit (a so-called GLM²⁷, made up of a linear and a non-linear transformation), and pooling over the outputs (taking the maximum over the activations) before passing the result to the next unit one layer higher up²⁸.
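To make the linear, non-linear and pooling steps concrete, here is a minimal NumPy sketch of a single CNN stage. It assumes a hand-picked 2×2 vertical-edge filter instead of a learned one, and the helper names (`conv2d_valid`, `relu`, `max_pool`) are hypothetical, not a library API. As in most deep learning libraries, the "convolution" is computed as a cross-correlation.

```python
import numpy as np

def conv2d_valid(image, kernel, bias=0.0):
    """Linear step: weigh every kernel-sized patch (a local receptive field)."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.empty((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            patch = image[i:i + kh, j:j + kw]
            out[i, j] = np.sum(patch * kernel) + bias
    return out

def relu(x):
    """Non-linear step: keep only positive responses."""
    return np.maximum(x, 0.0)

def max_pool(x, size=2):
    """Pooling step: keep the strongest activation in each size x size block."""
    h, w = x.shape[0] // size, x.shape[1] // size
    trimmed = x[:h * size, :w * size]
    return trimmed.reshape(h, size, w, size).max(axis=(1, 3))

# Toy 6x6 image with a vertical edge: dark left half, bright right half.
image = np.zeros((6, 6))
image[:, 3:] = 1.0

# Hand-made vertical-edge detector (illustrative, not a learned filter).
edge_kernel = np.array([[-1.0, 1.0],
                        [-1.0, 1.0]])

feature_map = relu(conv2d_valid(image, edge_kernel))  # 5x5, non-zero only at the edge
pooled = max_pool(feature_map, size=2)                 # 2x2 summary passed upward
print(pooled)
```

The filter responds only where dark meets bright, and max pooling keeps that response while halving the resolution, so the next stage only needs to know that an edge is present somewhere in a larger region.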

Information at the next level is obtained by integrating over information from all the individual units a layer below. In this way, information complexity is increased as we go up the hierarchy. At each level, the story repeats itself — are we just moving through different levels of a fractal recursion²⁹? What if we zoom all the way out, from cells to the I?

Our thinking itself seems to show the same structure: complex thought, such as itinerary planning in the London Tube system, also functions in a hierarchical fashion³⁰. Other types of abstract thinking may also function analogously to more fundamental computational elements; for example, prospective planning may be akin to path-finding³¹. It remains to be seen, though, whether (and how) this is reflected in neural circuit design; figuring out how it is encoded may yet require some more (equally) abstract thinking. Thus, abstract behavior may reflect the laws of the substrate from which it emerges. Abstract thinking may assume the same structure as the information with which it is built: “you are what you eat”.

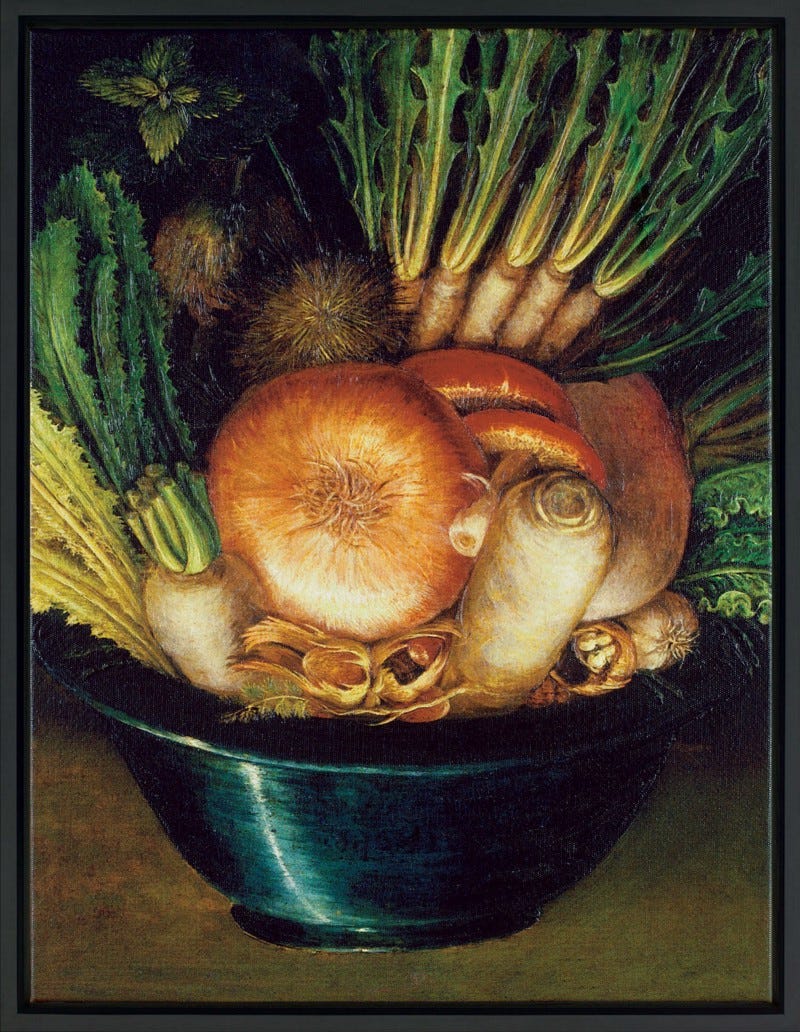

The missing piece, consciousness, may emerge naturally. A measure called ‘integrated information’ has been proposed to quantify consciousness³². This measure is maximal when both functional specialization and functional integration are high. According to this theory, when a system becomes less integrated (information propagates less widely through a system of interconnected areas) or loses specialization (activity is the same throughout the entire system), consciousness fades. Integrated information was found to be decreased during sleep³³ and under anesthesia³⁴, when consciousness is low. So, take a bowlful of specialized units, wire them up appropriately, and you could have ‘consciousness’ emerge at the top of the hierarchy.

Knowledge recycling

Despite the specialization, a lot of machinery is actually re-used to solve a variety of tasks³⁵ (think, again, of energy and space constraints). Whether you are a taxi driver trying to find your way around the city or a birdwatcher on the hunt for a new species, you need neurons that can detect edges. This is predicated on the fact that there are edges in our natural world. Even if on the surface two pictures may seem as different as those of Trafalgar Square and the pigeons on top of Admiral Nelson, at a deeper level they may share some of the statistics that are common to all natural images (and very unlike white noise, for instance; think of the natural-world constraint). Once learned, these properties can be applied to detecting new images and concepts more easily.

Trained on natural images, artificial neurons in CNNs come to have response characteristics that closely resemble those of neurons in visual cortex, as alluded to in the introduction. What is more, artificial networks trained to approximate the responses of retinal ganglion cells (output cells, at the last layer of complexity in the eye) reproduce various properties of cells that lie earlier in the visual hierarchy, even though these networks were not explicitly trained to approximate those responses³⁶. One such property is contrast adaptation: cells adjust their responses to the signal properties they are faced with, ensuring efficient encoding. Owing to a common constraint (natural images), similar organization (hierarchical), and similar computation (non-linear transformation), similar computational properties seem to emerge in real and artificial networks.
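The gain-control idea behind contrast adaptation can be illustrated with a deliberately simplified sketch: divide each input by the contrast (standard deviation) measured over a recent time window. This divisive normalization is an assumption chosen for illustration, not the model used in the cited study; `adapt_to_contrast`, the window length and the test stimulus are all hypothetical.

```python
import numpy as np

def adapt_to_contrast(signal, window=50, eps=1e-8):
    """Rescale each sample by the contrast (std) of the most recent `window` samples."""
    out = np.zeros_like(signal)
    for t in range(len(signal)):
        recent = signal[max(0, t - window + 1): t + 1]
        out[t] = (signal[t] - recent.mean()) / (recent.std() + eps)
    return out

# Hypothetical stimulus: the same 25-sample oscillation, first at low, then at high contrast.
time = np.arange(400)
amplitude = np.where(time < 200, 0.1, 2.0)
stimulus = amplitude * np.sin(2 * np.pi * time / 25)

response = adapt_to_contrast(stimulus)

# Compare stretches where the whole window lies within one contrast level.
low, high = slice(50, 200), slice(250, 400)
raw_ratio = stimulus[high].std() / stimulus[low].std()      # input: about 20 times stronger
adapted_ratio = response[high].std() / response[low].std()  # output: about equal
print(raw_ratio, adapted_ratio)
```

Once the window has settled on a contrast level, the output has the same amplitude at both levels, so the downstream range of responses is spent on the shape of the signal rather than on its overall strength.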

These computational properties are a mirror image of the affordances of our environment. Once we have learned that contrast can vary, our cells can readily modulate their responses to counteract that change and extract the essence of the image. Artificial networks have not quite picked up on all relevant regularities, though, as they are still easily fooled by adversarial attacks (two images that look identical to humans can produce catastrophically different predictions in artificial networks³⁷). Once correctly in place, these computational properties can help us not only perceive, but also anticipate our natural world.

At a higher level of thought, this translates into what psychologists call ‘Gestalt laws’³⁸: certain principles by which we expect the world to function. Infants begin using their senses to build a coherent model of the world from the moment they are born³⁹,⁴⁰. When this ‘world model’ is violated (e.g., lines don’t continue where we expected them to, per the law of closure), babies are surprised⁴¹. As we encounter these principles in early life, they mold our neural trajectories in their image. Later on, we don’t need to re-learn these things; we can let thoughts flow along established pathways. Exploiting commonalities also helps with the goal of minimizing energy expenditure: we may carve out creeks and rivers as we add to our experiences, but we do not need to carve out the Amazon anew.

An analogous process has emerged in training deep neural networks, known as ‘transfer learning’⁴²: a network trained on hundreds of thousands of images of all sorts may help you solve a very particular classification task (Trafalgar vs. pigeons), maybe even in a seemingly distant domain. In this way, one may exploit previous learnings in solving new tasks. At some point it breaks down, though, as the skills get more specific. How to effectively store specific knowledge without obliterating previous learnings is a matter of ongoing research. It may require fine-tuning learning algorithms⁴³ or adding explicit memory components⁴⁴.

Neural networks (and robots) have still not learned to properly represent higher-order computational properties such as ‘world models’. They are not yet able to learn as quickly (e.g., from few examples, as in one-shot learning) or as independently as human babies⁴⁵ (the feats CNNs achieve as described above rely on supervised learning, not on unsupervised learning, in which properties are learned without labeled examples). There are diverging opinions on how to endow artificial agents with more human-like capabilities, but they generally fall along a spectrum of how much knowledge should be specified up front (think symbolic AI; IBM’s Watson) vs. how much will fall out of a good learning algorithm applied to the right task (e.g., the idea of letting artificial agents discover common sense through video⁴⁶,⁴⁷). This mirrors the debate of innate vs. learned (nature vs. nurture)⁴⁸.

Circuit specialization

Over a longer time horizon (generations), these principles may become baked into the neural architecture itself; biological systems may come to exhibit circuit specializations that nature has judged useful in letting human agents survive generation after generation. In this way, we may come to observe motifs specialized in vision tasks as implemented in visual cortex (analogous to convolutional neural networks for images), face processing in the temporal lobe (loosely analogous to capsule networks, which exploit more of the proportions found in faces), sequential planning in frontal cortex (analogous to recurrent neural networks for learning sequential information), judging relations between objects in frontal cortex (analogous to relational neural networks for better discerning relational properties), dopamine modulation in the basal ganglia (analogous to reinforcement learning), or other functions for which no analogous artificial networks have yet been developed, such as the rapid continuous processing that the cerebellum engages in (optimized for rapidly correcting errors while movements are being carried out).

In the same way, we are now starting to bake neural network algorithms into silicon chips (e.g., Tensor Processing Units). This becomes increasingly useful as we strive for higher performance and shorter computation times. As we build more and more of our infrastructure on top of data- and therefore power-hungry systems (AI cloud systems, blockchain), it may become increasingly important to take energy efficiency into account. We may thus yet turn to more biologically realistic circuit designs in the future⁴⁹.

Given the basic machinery, further specialization may occur at the top of the pyramid depending on our daily needs and the skills that we hone in particular professions. The brain is highly plastic in the sense that it can adjust to current demands. With repeated stimulation of the fingertips, for instance, the brain region representing the fingertips will expand⁵⁰. If you re-wire the brain by sending visual instead of auditory information to auditory cortex, the brain remodels auditory cortex into a visually responsive area, complete with typical visual pathways⁵¹. And finally, after (spinal cord) injury, the brain can learn to re-route information through new connections⁵².

In this way, more processing power may be delegated to spatial reasoning in taxi drivers, who have to find their way through London’s maze, leading to an enlarged hippocampus (the part of the brain engaged in spatial reasoning)⁵³. This may be done by stacking together computational modules to create specialized processing units for, say, sequential relational reasoning (combining relational with recurrent networks), or, more broadly, by combining different types of learning mechanisms (supervised, unsupervised, or reinforcement learning). Over longer time horizons, more highly specialized circuits may have emerged that encode a specific bias towards the things we expect to encounter in the world with high frequency (e.g., face-processing units⁵⁴ and similar units for other ‘proto-concepts’⁵⁵).

Neural toolsets

So how do we get from here to representing complex emotions, ornate images, and mysterious harmonies, keeping our constraints in mind? We need to organize information into meaningful units, meaningful lego blocks with which to build our castle. The better suited these lego blocks are to building our particular types of castles, the less we need to re-shape and re-model, and the less we need to concern ourselves with re-writing bits and pieces in low-level languages (C++) rather than building empires with high-level frameworks (TensorFlow or PyTorch). In Einstein’s words, we need our blocks as simple as possible, but no simpler, especially if we are concerned about efficient energy use.

So what is this neural toolset? It is Darwin’s basic emotions (expressions that are conserved across species)⁵⁶, Gábor functions in vision (complex functions that are a good representation of higher-level visual neurons’ tuning curves)⁵⁷, motor primitives (elementary movement trajectories that are elicited by stimulating single neurons in motor cortex)⁵⁸, probability sets for relations between objects (grid cells potentially encoding relations between objects/concepts in the world, effectively yielding an ‘adjacency matrix’ which can be used for trajectory planning⁵⁹,⁶⁰, or perhaps even for analogical reasoning (see below)…), and task sets (task trajectories with which to represent decision making in complex tasks)⁶¹. In math speak, this problem translates to finding the minimum set of basis vectors with which to span the task space⁶²; once found, elaborate structures can be built up with linear combinations of these vectors. These efficient representations may indeed naturally emerge when training neural networks: deep neural networks were found to go through two stages, one involving learning the properties of the data, and the second compressing the learned knowledge into its most efficient form⁶³.
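The "minimum set of basis vectors" idea can be made concrete with standard linear algebra. In this NumPy sketch the four "task" vectors are hypothetical and deliberately built from just two underlying directions; the singular value decomposition (SVD) recovers how many basis vectors are actually needed (the rank) and an orthonormal basis from which a new task can be rebuilt as a linear combination. It illustrates only the math, not how the brain might compute such a basis.

```python
import numpy as np

# Hypothetical "task" vectors in a 4-D feature space.
# Rows 2 and 3 are linear combinations of rows 0 and 1.
tasks = np.array([
    [1.0, 0.0, 2.0, 0.0],
    [0.0, 1.0, 0.0, 3.0],
    [2.0, 1.0, 4.0, 3.0],    # = 2 * row0 + row1
    [1.0, -1.0, 2.0, -3.0],  # = row0 - row1
])

# The number of non-negligible singular values is the minimum number of basis vectors.
U, s, Vt = np.linalg.svd(tasks)
rank = int(np.sum(s > 1e-10 * s.max()))
basis = Vt[:rank]  # orthonormal rows spanning the task space

# Express a new task as a linear combination of the basis vectors.
new_task = np.array([3.0, 2.0, 6.0, 6.0])  # = 3 * row0 + 2 * row1
coeffs = basis @ new_task                  # projections onto the orthonormal basis
reconstruction = coeffs @ basis
print(rank, np.allclose(reconstruction, new_task))
```

Any task lying in the same span is reproduced exactly from two coefficients; a task outside the span would only be approximated, which is one way to see why the choice of basis matters.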

At yet higher levels of thinking/abstraction (that is, at the level of complex information immediately available for decision-making), another word for neural toolset may be what psychologists refer to as ‘chunking’: routines that are established by stringing together individual items (e.g., going from thinking about the movement of each finger in playing a chord to thinking in whole phrases by the time one performs the piece at a recital, Horowitz-style). Over time, this may lead to the emergence of explicit priors, biases, and heuristics (in addition to the implicit priors baked into the neural architecture itself) that help guide our actions and reactions without the need for much (energy-intensive) conscious thought. In this way, neural toolsets facilitate knowledge recycling.

In the visual domain, this chunking may become evident as certain prototypical imagery shared across individuals (in other words, archetypes⁶⁴, possibly genetically encoded or, more likely, emerging through the statistical constraints imposed by our shared natural surroundings). These images may then be used to construct more complex concepts or image sequences unfolding over time, for example in the form of metaphors. Our language is rife with metaphorical expressions, such as “heart of a problem”, “fruit of the labor”, and “shoulder a responsibility”⁶⁵, which can readily be employed as templates that communicate complex meaning.

These concepts can then be used to engage in analogical reasoning⁶⁶. This means we can start exploiting relationships between existing concepts to infer how a new concept might behave: for example, knowing the relation between brother and father, we could make sense of how ‘sister’ relates to ‘mother’. Going one step further, recognizing that somebody is ‘like a father’ or ‘like a brother’ to someone means we can readily understand the meaning of a new concept in terms of previously established relationships. Artificial neural networks trained on natural language tasks have been shown to be capable of such analogical reasoning. Taking the offset between the vector representations of ‘Einstein’ and ‘scientist’, we can ask the network what the analogous professions of ‘Mozart’ and ‘Picasso’ are likely to be: we get ‘violinist’ and ‘painter’, respectively⁶⁷.
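The vector arithmetic behind such analogies can be sketched in a few lines. The three-dimensional vectors below are hypothetical and hand-made (one axis each for "female", "older generation" and "family member"); trained language models learn much larger vectors from text, but the query works the same way: compute b - a + c and return the nearest remaining word by cosine similarity.

```python
import numpy as np

# Hypothetical hand-made embeddings: [female, older generation, family member].
vectors = {
    "brother": np.array([0.0, 0.0, 1.0]),
    "sister":  np.array([1.0, 0.0, 1.0]),
    "father":  np.array([0.0, 1.0, 1.0]),
    "mother":  np.array([1.0, 1.0, 1.0]),
    "uncle":   np.array([0.0, 1.0, 0.6]),
    "friend":  np.array([0.5, 0.0, 0.0]),
}

def cosine(a, b):
    """Cosine similarity between two vectors."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

def analogy(a, b, c):
    """Solve a : b :: c : ? via the offset b - a added to c, then nearest neighbour."""
    target = vectors[b] - vectors[a] + vectors[c]
    candidates = {w: v for w, v in vectors.items() if w not in (a, b, c)}
    return max(candidates, key=lambda w: cosine(candidates[w], target))

print(analogy("brother", "father", "sister"))
```

Here "father" minus "brother" isolates the "older generation" direction; adding it to "sister" lands exactly on "mother", which wins over distractors such as "uncle".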

Knowledge Webs

On the substrate level, biological networks tend to organize similar concepts so that they are also physically close, with abstract similarity in concept space manifesting as physical proximity. This is exemplified by what is known as Hebb’s rule: what fires together wires together. Neurons that tend to be activated together or in close succession establish connections with each other, while those that don’t lose them (have their connections pruned)⁶⁸. Over time, network hubs emerge: crossroads lying at the intersection of commonly used information pathways⁶⁹. All of this again helps drive down energy costs, as unnecessary connections are discarded and information pathways become optimally connected. Mainstream deep networks trained with backpropagation do not rely on Hebbian learning, possibly because no effective mechanism has yet been found to carry it out in a computationally efficient manner.
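A plain Hebbian update, Δw_ij = η·x_i·x_j, followed by pruning of weak connections, can be simulated directly. In this NumPy sketch the activity patterns are hypothetical: neurons 0 and 1 fire together most often, neurons 2 and 3 often fire together, and neuron 2 only occasionally fires with 0 and 1. The pruning threshold (half the strongest weight) is an arbitrary assumption.

```python
import numpy as np

def hebbian_train(patterns, epochs=10, lr=0.1):
    """Plain Hebbian rule: add lr * x_i * x_j to w_ij whenever neurons i and j are co-active."""
    n = patterns.shape[1]
    w = np.zeros((n, n))
    for _ in range(epochs):
        for x in patterns:
            w += lr * np.outer(x, x)
    np.fill_diagonal(w, 0.0)  # ignore self-connections
    return w

def prune(w, fraction=0.5):
    """Hypothetical pruning rule: drop connections weaker than `fraction` of the strongest."""
    pruned = w.copy()
    pruned[pruned < fraction * pruned.max()] = 0.0
    return pruned

# Hypothetical activity patterns for 4 neurons (1 = firing, 0 = silent).
patterns = np.array([
    [1, 1, 0, 0],
    [1, 1, 0, 0],
    [1, 1, 1, 0],  # neuron 2 occasionally joins neurons 0 and 1
    [0, 0, 1, 1],
    [0, 0, 1, 1],
], dtype=float)

weights = hebbian_train(patterns)
connections = prune(weights)
n = connections.shape[0]
surviving = [(i, j) for i in range(n) for j in range(i + 1, n) if connections[i, j] > 0]
print(surviving)  # [(0, 1), (2, 3)]
```

Only the frequently co-active pairs keep their connections; the pruning step is a crude stand-in for the loss of rarely used connections.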

Moving up yet another level in complexity, this wiring by co-occurrence maps onto the process by which we string together sensory experiences: across modalities, across time, across space. Think of that particular note of perfume that conjures up the image of our significant other, together with the restaurant we first met in, the feeling of excitement that permeated us as she walked in, the red color of her dress, the roses that we bought, the photosynthesis reaction by which that rose functions, and all the way up again to the abstract concept of ‘I love you’, all triggered by the initial smell⁷⁰. One item in the chain triggers the activation of all associated concepts, like the waves propagating outward from where we throw a stone into the water. An older type of artificial neural network, the Hopfield network, exemplifies this property, which one may also call ‘pattern completion’: one is able to recover the whole by feeding in part of the information⁷¹. In the human brain, this property may be mediated by the hippocampus, which is involved in spatial reasoning and perhaps more generally in providing a universal (probabilistic) coordinate system for the brain.
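Pattern completion in a Hopfield network can be demonstrated with a small NumPy sketch. Two ±1 patterns are stored with the Hebbian outer-product rule (weights proportional to the sum of x·xᵀ over the stored patterns, without self-connections), and the network is then cued with only the first half of one pattern, the unknown half set to 0. Units are updated asynchronously, one at a time, until nothing changes. The patterns and the cue are hypothetical.

```python
import numpy as np

def train_hopfield(patterns):
    """Store +/-1 patterns with the Hebbian outer-product rule (no self-connections)."""
    n = patterns.shape[1]
    w = patterns.T @ patterns / n
    np.fill_diagonal(w, 0.0)
    return w

def recall(w, cue, max_sweeps=20):
    """Asynchronous updates: each unit in turn takes the sign of its summed input."""
    s = cue.astype(float)  # astype returns a copy, so the cue is left untouched
    for _ in range(max_sweeps):
        changed = False
        for i in range(len(s)):
            new_value = 1.0 if w[i] @ s >= 0 else -1.0
            if new_value != s[i]:
                s[i] = new_value
                changed = True
        if not changed:
            break
    return s

# Two hypothetical, mutually orthogonal memories.
patterns = np.array([
    [1, 1, 1, 1, -1, -1, -1, -1],
    [1, -1, 1, -1, 1, -1, 1, -1],
], dtype=float)
w = train_hopfield(patterns)

cue = np.array([1, 1, 1, 1, 0, 0, 0, 0], dtype=float)  # second half unknown
completed = recall(w, cue)
print(np.array_equal(completed, patterns[0]))  # True
```

Starting from half of the information, the network settles into the full stored pattern, the artificial counterpart of a single cue reviving a whole memory.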

Wagner’s Tristan & Isolde demystified

So how does the mysterious Wagnerian Tristan theme manage to move us so profoundly? Individual notes produced by the various instruments are detected by sensors in the ear and parsed into their underlying frequency components. These bits are built up into larger bytes representing certain notes. At a higher level of abstraction, notes are chunked into larger units such as chords (e.g., the Tristan chord). Chords may in turn be used to define fundamental building blocks ideally suited to tiling the musical universe: harmonies. At the same time, visual impressions are parsed into their individual edges and built up into representations of heroic-tragic imagery. All of these activations get funnelled along the neural grooves that have been carved out by experience, flowing through their individual paths and through previously established intersections. By virtue of being activated together, these images and harmonies get bound together into coherent experiences, literally wired together. Chords previously associated with particular images and feelings will re-activate those memories, conjuring up images of heroic deeds and innocent love together with the heart-wrenching feeling of imminent death. The more ambiguous (in German: “mehrdeutig”) the harmonies, the more associations are possible. In the end, no chord or note or frequency exists on its own, no matter how self-sufficient it may appear: the more different vector combinations (“imaginary lines of projection”) get activated together in the high-dimensional space that is our neural canvas, the more profound the experience. All of the levels in the representational hierarchy exist together; zooming out from the subconscious into the conscious, we suddenly end up with one word that we whisper to our companion (real or artificial), one word that encompasses it all: beautiful.

Conclusion

In conclusion, I think much can be, and remains to be, learned from the functioning of constraints, both for understanding neural systems and for artificial networks: under what set of conditions will these networks produce certain results, and how are these conditions related to what we find in the natural world (the kinds of tasks we need our brains, or want our artificial networks, to solve)? From this we may glean insights into why neural systems look the way they do. We can test our hypotheses in artificial networks and see whether they develop a fruit-face under certain conditions (much like stem cells, which can develop along particular paths if prodded right). In this sense, it may be possible to teach artificial networks new tricks without recreating a full-fledged artificial humanoid (as the concept of embodiment might suggest⁷²,⁷³), much like we did not have to copy birds 1:1 to create modern planes.

I have sketched out a picture of the brain as hierarchically stacked specialized modules. As we go on to develop more capable artificial agents, we may need to stack more of these specialized units together in intelligent ways⁷⁴. We may need to combine quite fundamental (unsupervised) learning units with more specialized (supervised) learning units. Yet for all of these to work, we still need to tweak all learning algorithms (unsupervised and reinforcement learning especially), or invent new ones.

It is important to start recycling knowledge. Yet it is far from trivial to decide which knowledge should be kept and which discarded, which should be trickled down into specialized modules or even circuit design, and which can easily be learned from the environment with a few examples. It may ultimately come down to striking the right balance between these.

If we do all of this right, we may see neural toolsets and knowledge webs emerge, and at some point perhaps, an artificial Richard Wagner.

P.S.:

What to make of the famous Tristan chord, the most mysterious chord in musical history? Though nobody can quite put a finger on it, many describe it as expressing a feeling of intense, perhaps desperate longing. Music theorists are desperate because they cannot quite categorize it harmonically: the chord can be resolved into several different harmonies; it is multivalent (“mehrdeutig”). The solution and intended effect may lie in this very desperation. The chord remains unresolved, longing to be resolved throughout the remainder of the piece. The chord may thereby function as the musical embodiment of an emotional state that serves as a canvas upon which the rest of the piece unfolds. Music may just be the transliteration of impressions and emotions into musical lego blocks, cunningly stacked together.


Acknowledgments

Special thanks to Andreas Márton for a lifetime of inspirations, and to Moritz Mueller-Freitag for the fruitful discussions that prompted me to write this piece and generous help in editing.